6 Game-Changing Things to Know About Apache Arrow Support in mssql-python

If you've ever tried to pull a million rows from SQL Server into a Python dataframe, you know the pain: a million row objects, millions of small allocations for the interpreter to track, and then more overhead just to build the dataframe. With the latest update to mssql-python, you can now fetch SQL Server data directly as Apache Arrow structures, a faster, leaner path for anyone working with Polars, Pandas, DuckDB, or any Arrow-native library. This feature was contributed by community developer Felix Graßl (@ffelixg), and it’s a game-changer for data pipelines. Here’s everything you need to know.

- The Python Object Overhead Problem

- What Apache Arrow Brings to the Table

- Zero-Copy Data Exchange via the Arrow C Data Interface

- Concrete Benefits: Speed, Memory, and Interoperability

- How mssql-python’s Columnar Fetch Works

- Who Built It and What’s Next

1. The Python Object Overhead Problem

A traditional Python database driver builds a new Python object for every row it fetches and another for every column value in that row. A million-row, ten-column result set therefore creates ten million value objects on top of a million row containers. Each object requires memory allocation, reference counting, and eventual garbage collection. The result is high memory usage, CPU churn, and slower performance, especially with temporal types like DATETIME or DATETIMEOFFSET, where per-value conversion to Python objects adds extra overhead. Dataframe libraries like Polars then have to copy and reinterpret that data into their own columnar layout, wasting further cycles. The core problem: the fetch loop is bottlenecked by Python’s object model, not by I/O or database speed.
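To make the overhead concrete, here is a rough sketch using only the standard library. It compares a list of Python int objects with a single typed buffer holding the same values. The `array` module stands in for an Arrow buffer here (that substitution is an assumption for illustration), and the exact byte counts will vary by Python version and platform.

```python
import sys
from array import array

N = 100_000

# Row-style storage: every value is its own heap-allocated Python object.
# The offset keeps values out of CPython's small-integer cache.
as_objects = [i + 1_000_000 for i in range(N)]
object_bytes = sys.getsizeof(as_objects) + sum(sys.getsizeof(v) for v in as_objects)

# Column-style storage: one contiguous buffer of 64-bit integers.
as_buffer = array("q", as_objects)
buffer_bytes = sys.getsizeof(as_buffer)

print(f"list of int objects: {object_bytes:,} bytes")
print(f"contiguous int64   : {buffer_bytes:,} bytes")
print(f"ratio              : {object_bytes / buffer_bytes:.1f}x")
```

The list pays for a pointer per element plus a full object header per value, while the typed buffer pays eight bytes per value and nothing else.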

2. What Apache Arrow Brings to the Table

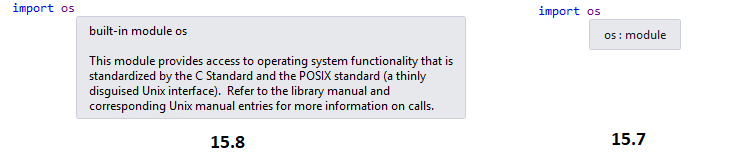

Apache Arrow takes the opposite approach with a columnar, in-memory format. Instead of storing a table as rows of Python objects, Arrow stores all values for a column contiguously in a typed buffer. Nulls are tracked via a compact validity bitmap (one bit per value), not as None objects. This design is cache-friendly and allows vectorized operations. The bigger advantage is zero-copy interoperability between languages: Arrow defines a stable in-memory layout (exposed through the Arrow C Data Interface) that any language can produce or consume by exchanging a pointer, with no serialization, no copies, and no re-parsing. A C++ database driver and a Python dataframe library can work on the same memory without either knowing about the other.
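The layout described above can be sketched by hand with the standard library. This is not how pyarrow or mssql-python build columns; the helper names `build_int64_column` and `is_valid` are hypothetical. It only mirrors the idea of a typed values buffer plus a validity bitmap in which a set bit means "valid" and bits are numbered from the least significant bit, as in the Arrow columnar format.

```python
from array import array

def build_int64_column(values):
    """Pack a list of ints/None into (values buffer, validity bitmap, null count)."""
    # Null slots still occupy space in the values buffer; their content is ignored.
    data = array("q", (0 if v is None else v for v in values))
    bitmap = bytearray((len(values) + 7) // 8)
    for i, v in enumerate(values):
        if v is not None:
            bitmap[i // 8] |= 1 << (i % 8)  # set bit i: value i is valid
    null_count = sum(v is None for v in values)
    return data, bitmap, null_count

def is_valid(bitmap, i):
    """Return True if slot i holds a real value rather than a null."""
    return bool(bitmap[i // 8] & (1 << (i % 8)))

data, bitmap, null_count = build_int64_column([7, None, 42, None, 5])
print(list(data), bin(bitmap[0]), null_count)
```

Five values need a single bitmap byte, so null tracking costs one bit per value instead of a pointer to `None`.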

3. Zero-Copy Data Exchange via the Arrow C Data Interface

The Arrow C Data Interface is an ABI (Application Binary Interface) specification: a binary-level contract that dictates how compiled code lays out data in memory. Two components written in different languages and running in the same process can adhere to the same ABI and exchange data directly, with no serialization step. For a database driver like mssql-python, this means the entire fetch loop can run in C++ and write values directly into Arrow buffers. No Python objects are created per row, and the Python side receives a pointer to the Arrow memory. Libraries like Polars, Pandas (via ArrowDtype), DuckDB, and Hugging Face Datasets can consume this data without a per-value conversion step.
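As a rough illustration of "exchanging a pointer", the sketch below mirrors the `ArrowArray` struct from the C Data Interface in `ctypes` and hands an int64 column from a pretend producer to a pretend consumer. The struct fields follow the published specification; everything else (the `import_int64` helper, the no-op release callback, the omission of the companion `ArrowSchema` struct) is a simplification assumed for this example, not how mssql-python is implemented.

```python
import ctypes

class ArrowArray(ctypes.Structure):
    """ctypes mirror of `struct ArrowArray` from the Arrow C Data Interface."""

RELEASE_FN = ctypes.CFUNCTYPE(None, ctypes.POINTER(ArrowArray))

ArrowArray._fields_ = [
    ("length", ctypes.c_int64),
    ("null_count", ctypes.c_int64),
    ("offset", ctypes.c_int64),
    ("n_buffers", ctypes.c_int64),
    ("n_children", ctypes.c_int64),
    ("buffers", ctypes.POINTER(ctypes.c_void_p)),
    ("children", ctypes.POINTER(ctypes.POINTER(ArrowArray))),
    ("dictionary", ctypes.POINTER(ArrowArray)),
    ("release", RELEASE_FN),
    ("private_data", ctypes.c_void_p),
]

released = []  # illustration only: records that the consumer called release

@RELEASE_FN
def release_noop(array_ptr):
    # A real producer would free its buffers here and set `release` to NULL.
    released.append(True)

# Producer side: one int64 column with no nulls.
values = (ctypes.c_int64 * 4)(10, 20, 30, 40)
# Primitive arrays carry two buffers: [validity bitmap (absent here), data].
buffers = (ctypes.c_void_p * 2)(None, ctypes.addressof(values))
producer_array = ArrowArray(
    length=4, null_count=0, offset=0, n_buffers=2, n_children=0,
    buffers=buffers, release=release_noop,
)

def import_int64(array_address):
    """Consumer side: view the producer's data buffer without copying it."""
    arr = ArrowArray.from_address(array_address)
    start = arr.buffers[1] + arr.offset * ctypes.sizeof(ctypes.c_int64)
    view = (ctypes.c_int64 * arr.length).from_address(start)
    return arr, view

# Only an integer address crosses the boundary between the two sides.
arr, view = import_int64(ctypes.addressof(producer_array))
received = list(view)  # copied into a list only for printing
same_memory = ctypes.addressof(view) == ctypes.addressof(values)
arr.release(ctypes.byref(arr))  # the consumer signals it is done with the data
print(received, same_memory)
```

The consumer never learns how the producer allocated the memory. It only follows the struct layout, which is the point of agreeing on an ABI.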

4. Concrete Benefits: Speed, Memory, and Interoperability

The switch to Arrow delivers three big wins. Speed: the columnar fetch path avoids Python object creation per row, making fetching noticeably faster, especially for temporal types like DATETIME and DATETIMEOFFSET, where Python-side per-value conversions are eliminated entirely. Lower memory usage: a column of one million integers is now a single contiguous C array, not a million individual Python objects. Seamless interoperability: Arrow-native tools can ingest this data without copying it. For example, a Polars pipeline reading from mssql-python does not need to materialize intermediate Python objects for each value. This is a foundational improvement for any high-throughput data processing pipeline that touches SQL Server.
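The temporal case is worth a small sketch. Arrow's timestamp type stores each value as a 64-bit integer count of time units since the Unix epoch, so a column of timestamps is just an int64 buffer. The example below assumes microsecond resolution and UTC for simplicity; which unit mssql-python chooses for each SQL Server type is not stated in this article, and the helper names are hypothetical.

```python
from array import array
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def to_micros(dt):
    """Encode an aware datetime as integer microseconds since the Unix epoch."""
    return (dt - EPOCH) // timedelta(microseconds=1)

def from_micros(us):
    """Decode integer microseconds back into an aware datetime, on demand."""
    return EPOCH + timedelta(microseconds=us)

# Object-per-value representation: one datetime object per row.
start = datetime(2024, 1, 1, tzinfo=timezone.utc)
stamps = [start + timedelta(seconds=i) for i in range(1000)]

# Columnar representation: a single int64 buffer, eight bytes per value.
column = array("q", (to_micros(dt) for dt in stamps))

print(column[0], from_micros(column[0]).isoformat())
```

A driver that writes the integer form directly never has to construct the datetime objects at all; a consumer decodes individual values only if it actually needs them as Python objects.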

5. How mssql-python’s Columnar Fetch Works

Under the hood, mssql-python now offers a dedicated execution path that fetches rows and writes them directly into Arrow arrays, with no Python wrapper objects and no list of tuples. The driver manages the Arrow buffers in C++, and when the Python caller requests the result set, it receives a pyarrow.RecordBatch or similar structure. Downstream libraries can then run filters, joins, and aggregations against those same buffers without first converting them to another format; because Arrow buffers are immutable, such operations produce new arrays rather than modifying the fetched data in place. In practice, you can chain a call along the lines of mssql_connect.execute("SELECT * FROM huge_table").fetch_arrow_table() (illustrative; check the mssql-python documentation for the exact method names) and then pass the result to Polars or DuckDB without converting formats. The result is an Arrow-native pipeline from fetch to analysis.
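The row-to-column step can be sketched in pure Python. This is a conceptual stand-in only: it uses `sqlite3` from the standard library instead of SQL Server, handles integer columns only, and does in Python what the article says mssql-python does in C++. The function name `fetch_columnar` is hypothetical and not part of any driver's API.

```python
import sqlite3
from array import array

def fetch_columnar(cursor, batch_size=1024):
    """Drain a DB-API cursor into one int64 buffer and validity bitmap per column."""
    names = [d[0] for d in cursor.description]
    data = [array("q") for _ in names]
    valid = [bytearray() for _ in names]
    n_rows = 0
    while True:
        rows = cursor.fetchmany(batch_size)
        if not rows:
            break
        for row in rows:
            for col, value in enumerate(row):
                if n_rows % 8 == 0:
                    valid[col].append(0)  # start a new bitmap byte every 8 rows
                if value is not None:
                    valid[col][n_rows // 8] |= 1 << (n_rows % 8)
                data[col].append(0 if value is None else value)
            n_rows += 1
    return {name: (data[i], valid[i]) for i, name in enumerate(names)}, n_rows

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE t (id INTEGER, qty INTEGER)")
conn.executemany("INSERT INTO t VALUES (?, ?)", [(1, 10), (2, None), (3, 30)])

columns, n_rows = fetch_columnar(conn.execute("SELECT id, qty FROM t ORDER BY id"))
for name, (values, bitmap) in columns.items():
    print(name, list(values), bin(bitmap[0]))
```

In this Python version every value still passes through a Python object on its way into the buffer. The gain described in this section comes from doing the same loop in native code, so the buffers are filled without that detour.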

6. Who Built It and What’s Next

This feature was contributed by community developer Felix Graßl (@ffelixg), and it’s now shipped in mssql-python for everyone to use. The open-source ecosystem around SQL Server and Python just got a major upgrade. Going forward, expect further optimizations: even more data types mapped to Arrow equivalents, better handling of large objects, and deeper integration with Polars and DuckDB for truly zero-copy analytics. If you’re building data-intensive applications with SQL Server, this is the feature you’ve been waiting for. Check out the mssql-python GitHub repository for documentation and examples.

Final thoughts: The addition of Apache Arrow support in mssql-python marks a significant leap forward for data engineers and scientists who rely on SQL Server. By eliminating per-value Python object overhead and enabling zero-copy data exchange, this feature reduces memory usage and speeds up workflows. Whether you’re a Polars aficionado, a Pandas loyalist, or a DuckDB enthusiast, you can now fetch SQL Server data without the per-row Python overhead and keep it in Arrow format through the rest of your pipeline. Give it a try and see the difference.
