Building a Year-in-Review Feature: The Tech Behind Spotify Wrapped 2025

Overview

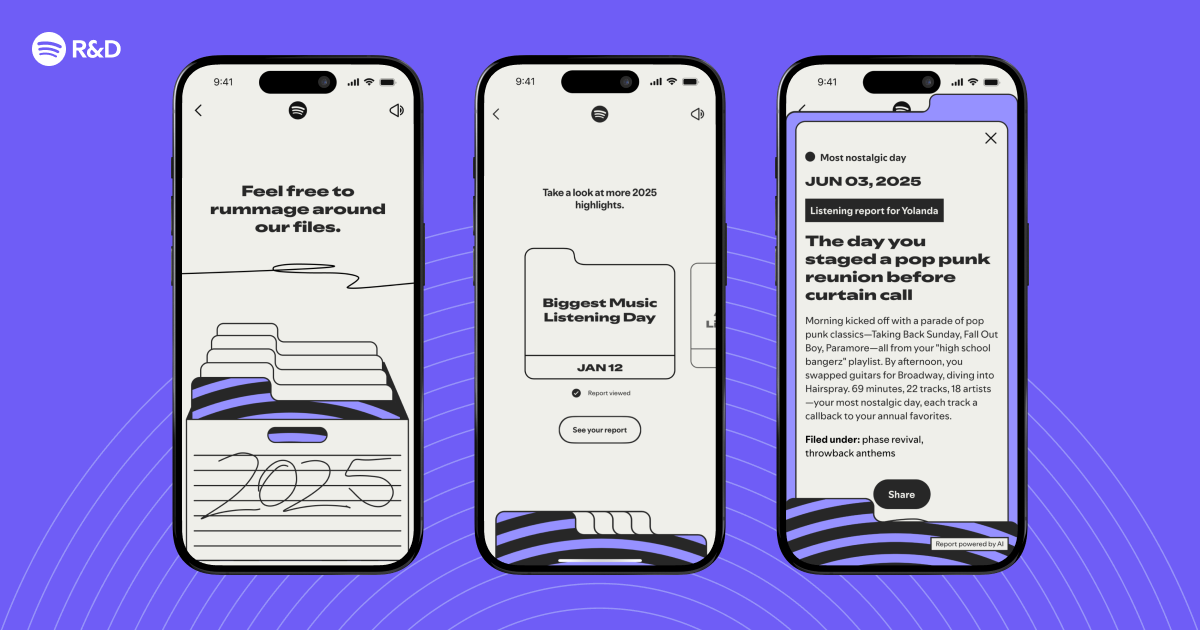

Every December, millions of Spotify users eagerly unlock their Wrapped—a personalized recap of their listening habits. But behind that sleek, shareable story lies a complex pipeline of data ingestion, machine learning, and narrative generation. This tutorial walks you through the technical architecture we built for Wrapped 2025, from raw streaming logs to the final interactive highlight reel. By the end, you’ll understand how to extract meaningful moments from unstructured user activity and package them into a compelling narrative.

Prerequisites

Tools & Technologies

- Programming language: Python 3.10+ (or Scala for Spark)

- Data processing: Apache Spark or Google Cloud Dataflow

- Storage: Cloud storage (AWS S3, GCS) + an OLAP database (e.g., BigQuery, Redshift)

- Machine learning: TensorFlow / PyTorch for embeddings, scikit-learn for clustering

- Narrative engine: template-based NLG in Python (optionally a Markov-chain generator), or GPT‑3.5 with careful prompt engineering

- Frontend: React or Vue.js with Canvas/WebGL for data visualizations

Skills

- Familiarity with batch/stream processing concepts

- Basic understanding of recommender systems and embeddings

- Experience handling user-sensitive data under privacy constraints

Step‑by‑Step Instructions

1. Data Ingestion & Storage

Every stream event—play, pause, skip, like, share—is logged in real time. For Wrapped we aggregate three months of data into a compressed time window. Create a pipeline that reads from Kafka topics and writes Parquet files partitioned by date and user hash.

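```python
# Spark Structured Streaming: Kafka -> Parquet, partitioned by date and user-hash bucket.
# Requires the spark-sql-kafka-0-10 connector on the Spark classpath.
from pyspark.sql import SparkSession, functions as F

spark = SparkSession.builder.appName("wrapped-ingest").getOrCreate()

# Columns match the OLAP table; assumes each Kafka message value is a JSON-encoded event.
EVENT_SCHEMA = "user_hash STRING, track_id STRING, ts TIMESTAMP, action STRING, device STRING"

stream_events = (spark.readStream.format("kafka")
                 .option("kafka.bootstrap.servers", "...")
                 .option("subscribe", "stream-events")  # hypothetical topic name
                 .load())

parsed = (stream_events
          .select(F.from_json(F.col("value").cast("string"), EVENT_SCHEMA).alias("e"))
          .select("e.*")
          .withColumn("date", F.to_date("ts"))
          # Bucket the user hash (64 buckets is an assumption) to avoid one directory per user
          .withColumn("user_bucket", F.expr("pmod(hash(user_hash), 64)")))

query = (parsed.writeStream
         .format("parquet")
         .option("path", "s3://wrapped-data/streams/")
         .option("checkpointLocation", "/checkpoints/")
         .partitionBy("date", "user_bucket")
         .start())

query.awaitTermination()
```

Store the final, de‑duplicated dataset in an OLAP table with the columns user_hash, track_id, ts, action, device. We use a 1% sample for model development to avoid early bias.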
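To make the de-duplication and 1% sample concrete, here is a minimal plain-Python sketch rather than the production Spark job. It assumes each event is a dict with the five OLAP columns, treats an event as a duplicate only when all five fields match, and samples by user (our assumption) so every sampled user keeps a complete listening history. The helper names `in_sample` and `dedupe_and_sample` are hypothetical.

```python
import hashlib
from collections.abc import Iterable


def in_sample(user_hash: str, rate: float = 0.01) -> bool:
    """Deterministically keep roughly `rate` of users, based on a hash of their key."""
    digest = hashlib.sha256(user_hash.encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big")  # roughly uniform in [0, 2**64)
    return value < rate * 2**64


def dedupe_and_sample(events: Iterable[dict], rate: float = 0.01) -> list[dict]:
    """Drop exact duplicate events, then keep only events from sampled users."""
    seen = set()
    kept = []
    for e in events:
        key = (e["user_hash"], e["track_id"], e["ts"], e["action"], e["device"])
        if key in seen:
            continue  # e.g. a redelivered message from an at-least-once pipeline
        seen.add(key)
        if in_sample(e["user_hash"], rate):
            kept.append(e)
    return kept
```

Hashing the user key instead of calling `random` makes the sample reproducible, so repeated model experiments see the same users.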

2. Feature Extraction

Raw events become features. For each user we compute:

- Top 100 tracks by play count (with listening time weighting)

- Genre distribution from track metadata

- Session patterns (morning vs. night, weekday vs. weekend)

- Listening bursts: hours with more than 3× the user’s average hourly plays
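The burst and session-pattern features above can be sketched in a few lines of standard-library Python. This is an illustration under stated assumptions, not the production feature job: the function names are hypothetical, the "average" for bursts is taken over hours with at least one play, and the morning (5:00 to 11:59) and night (22:00 to 4:59) boundaries are our own choices.

```python
from collections import Counter
from datetime import datetime


def listening_bursts(play_times: list[datetime], factor: float = 3.0) -> list[datetime]:
    """Return hour buckets whose play count exceeds `factor` x the user's average.

    Assumption: the average is over hours in which the user played at least once.
    """
    per_hour = Counter(t.replace(minute=0, second=0, microsecond=0) for t in play_times)
    if not per_hour:
        return []
    avg = sum(per_hour.values()) / len(per_hour)
    return sorted(h for h, n in per_hour.items() if n > factor * avg)


def session_pattern(play_times: list[datetime]) -> dict[str, float]:
    """Share of plays by time of day and day type (hour boundaries are assumptions)."""
    total = len(play_times) or 1
    morning = sum(5 <= t.hour < 12 for t in play_times)
    night = sum(t.hour >= 22 or t.hour < 5 for t in play_times)
    weekend = sum(t.weekday() >= 5 for t in play_times)  # Saturday=5, Sunday=6
    return {
        "morning_share": morning / total,
        "night_share": night / total,
        "weekend_share": weekend / total,
    }
```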

We also generate user and track embeddings with a collaborative filtering model (e.g., LightFM); the user embeddings represent each user’s musical taste in a latent space.

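```python
# Collaborative-filtering embeddings with LightFM
from lightfm import LightFM

# train_interactions: a scipy.sparse user x track matrix built upstream,
# e.g. with lightfm.data.Dataset.build_interactions()
model = LightFM(no_components=32, loss="warp")
model.fit(train_interactions, epochs=10)

user_embeddings = model.user_embeddings  # shape: (n_users, 32)
item_embeddings = model.item_embeddings  # shape: (n_tracks, 32)
```

3. Identifying Memorable Moments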

3.1 Anomaly Detection

We look for statistical outliers in listening behavior: a sudden change in genre, a track replayed 50 times in one day, or a listening session that lasted past 3 AM. Using a rolling z‑score (window=7 days), we flag events where |z| > 2.5. These become candidate “moments”.
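A minimal sketch of the rolling z-score, assuming the input is one number per day for a single user (for example, plays of one track). Each day is compared with the 7 days before it, so a spike does not inflate its own baseline. The function name and the floor of 1 play on the standard deviation (which avoids dividing by zero after a perfectly flat week) are assumptions.

```python
import statistics


def rolling_z_anomalies(daily: list[float], window: int = 7,
                        threshold: float = 2.5, min_std: float = 1.0) -> list[tuple[int, float]]:
    """Return (day_index, z) for days where |z| against the trailing window exceeds threshold."""
    flagged = []
    for i in range(window, len(daily)):
        history = daily[i - window:i]  # the `window` days before day i
        mean = statistics.fmean(history)
        # Floor the spread so a perfectly flat week cannot cause a division by zero
        std = max(statistics.pstdev(history), min_std)
        z = (daily[i] - mean) / std
        if abs(z) > threshold:
            flagged.append((i, z))
    return flagged
```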

3.2 Story archetype matching

Each moment is classified into a story template such as “Your guilty pleasure”, “The song that got you through”, or “New obsession”. We train a small feed-forward neural network on labeled historical data (users manually tagged moments in a 2024 pilot). The network takes the moment’s feature vector and outputs a story class.

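```python
from tensorflow import keras

NUM_ARCHETYPES = 5  # e.g. "Your guilty pleasure", "New obsession", ...

model = keras.Sequential([
    keras.Input(shape=(128,)),                  # 128-dim moment feature vector
    keras.layers.Dense(64, activation="relu"),
    keras.layers.Dropout(0.3),                  # dropout for regularization
    keras.layers.Dense(NUM_ARCHETYPES, activation="softmax"),  # one probability per archetype
])

# categorical_crossentropy expects one-hot encoded archetype labels
model.compile(loss="categorical_crossentropy", optimizer="adam", metrics=["accuracy"])
```

3.3 Ranking with Recency & Diversity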

We rank moments using a weighted sum of anomaly score, recency (closer to end of year gets weight 1.5), and novelty (embedding distance from the user’s average vector). The top 7 moments are selected.
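The ranking can be sketched as follows. The text does not specify the weights or the shape of the recency curve, so this version assumes equal weights of 1.0, a linear recency ramp from 1.0 on January 1 to 1.5 on December 31, and Euclidean distance for novelty; `Moment`, `recency_weight`, and `rank_moments` are hypothetical names.

```python
import math
from dataclasses import dataclass
from datetime import date


@dataclass
class Moment:
    label: str
    anomaly_score: float    # e.g. |z| from the anomaly detector
    day: date
    embedding: list[float]  # embedding of the moment's track


def recency_weight(day: date) -> float:
    """Linear ramp from 1.0 on Jan 1 to 1.5 on Dec 31 (ramp shape is an assumption)."""
    start, end = date(day.year, 1, 1), date(day.year, 12, 31)
    return 1.0 + 0.5 * (day - start).days / (end - start).days


def rank_moments(moments: list[Moment], user_vector: list[float], k: int = 7,
                 w_anomaly: float = 1.0, w_recency: float = 1.0,
                 w_novelty: float = 1.0) -> list[Moment]:
    """Score each moment as a weighted sum of anomaly, recency and novelty; keep the top k."""
    def score(m: Moment) -> float:
        novelty = math.dist(m.embedding, user_vector)  # distance from the user's average vector
        return (w_anomaly * m.anomaly_score
                + w_recency * recency_weight(m.day)
                + w_novelty * novelty)
    return sorted(moments, key=score, reverse=True)[:k]
```

The three terms live on different scales (z-scores, a 1.0 to 1.5 multiplier, embedding distances), so in practice the weights would need tuning, or the terms normalizing, before the sum is meaningful.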

4. Narrative Generation

With moments selected, we need to turn them into sentences. We use a template‑based NLG system that fills slots with extracted data. For example:

“You listened to {track} on repeat: {count} times in just one day. Was it the beat or the lyrics?”
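A minimal slot-filling sketch in Python, assuming templates use `str.format` placeholders named `{track}` and `{count}` and are keyed by a hypothetical archetype id. It refuses to render when a slot has no value, so a missing fact can never produce a half-empty sentence.

```python
import string

# Hypothetical archetype id -> template; placeholders use str.format syntax.
TEMPLATES = {
    "new_obsession": (
        "You listened to {track} on repeat: {count} times in just one day. "
        "Was it the beat or the lyrics?"
    ),
}


def fill_template(archetype: str, facts: dict) -> str:
    """Render a template, refusing to emit a sentence with an unfilled slot."""
    template = TEMPLATES[archetype]
    # Formatter().parse yields (literal, field_name, format_spec, conversion) tuples
    slots = {name for _, name, _, _ in string.Formatter().parse(template) if name}
    missing = slots - set(facts)
    if missing:
        raise KeyError(f"missing slots: {sorted(missing)}")
    return template.format(**facts)
```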

We also introduce a large language model (LLM) to freshen up templates. Prompts are strictly structured to reduce hallucination:

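```python
def build_prompt(facts: dict) -> str:
    # Constrain the LLM to the extracted facts to limit hallucination
    return f"""Given these facts: {facts}
Write one engaging sentence about the listening moment.
Do not add information not present in the facts."""
```

The output is then reviewed by a validation stage that checks for factual consistency (for example, that the stated play count matches the logged data).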
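One way to sketch that consistency check, assuming the facts are a flat dict: reject any generated sentence that states a number not found among the facts. This is a deliberately narrow heuristic, and `numbers_consistent` is a hypothetical name; it would catch an LLM turning a play count of 47 into 74, but not other kinds of invented detail.

```python
import re


def numbers_consistent(sentence: str, facts: dict) -> bool:
    """Return True only if every number in `sentence` also appears in `facts`.

    Digits inside string facts count too, so a track titled "22" is allowed.
    Formatted numbers such as "1,000" split into "1" and "000"; a real
    validator would normalize them first.
    """
    allowed = set()
    for value in facts.values():
        allowed.update(re.findall(r"\d+", str(value)))
    return all(n in allowed for n in re.findall(r"\d+", sentence))
```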

5. Visualisation & Interactive Story

The front‑end fetches a JSON payload from our API endpoint. Each moment is a slide with data visualisations (radar chart for genres, bar chart for top artists). We use D3.js for custom charts and a custom WebGL component for the “listening aura” that pulses to the rhythm of the user’s most‑played song.
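On the server side, that payload could be assembled as below. The field names (`user`, `slides`, `title`, `narrative`, `chart`, `data`) and the `Slide`/`build_payload` names are assumptions chosen to match a slide that shows a title, a narrative, and one chart; they are not a documented Spotify API.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Slide:
    title: str
    narrative: str
    chart: str                                # e.g. "radar" for genres, "bar" for top artists
    data: dict = field(default_factory=dict)  # values the chart plots


def build_payload(user_hash: str, slides: list[Slide]) -> str:
    """Serialize the story as the JSON document the front end renders slide by slide."""
    return json.dumps({"user": user_hash, "slides": [asdict(s) for s in slides]})
```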

```jsx
// React component snippet: one slide per moment
const StorySlide = ({ moment }) => {
  return (
    <section>
      <h2>{moment.title}</h2>
      <p>{moment.narrative}</p>
    </section>
  );
};
```

Common Mistakes

❌ Using raw play counts without weighting

Not all plays are equal. A 30‑second skip should count less than a full listen, so we weight each play by the fraction of the track actually heard: weight = min(1, time_played / track_duration). Without this weighting, “accidental plays” inflate popularity.
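A sketch of the weighted count, assuming each play arrives as a `(track_id, seconds_played, track_duration_seconds)` tuple; `weighted_top_tracks` is a hypothetical helper that returns the top 100 by default, matching the feature list in step 2.

```python
from collections import defaultdict


def weighted_top_tracks(plays, n=100):
    """Rank tracks by listening-time-weighted plays.

    plays: iterable of (track_id, seconds_played, track_duration_seconds).
    Each play contributes min(1, seconds_played / duration), so a 30-second
    skip of a 200-second track counts as 0.15 of a play.
    """
    scores = defaultdict(float)
    for track_id, played, duration in plays:
        if duration <= 0:
            continue  # malformed metadata: skip rather than divide by zero
        scores[track_id] += min(1.0, played / duration)
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:n]
```

With raw counts, three 30-second skips of one track would outrank two full listens of another; with the weighting, the full listens win.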

❌ Ignoring user privacy from the start

Wrapped is opt‑in, but even with consent, never store raw user identifiers. We hash user IDs with a year‑specific salt to prevent re‑identification. Data retention is strictly limited to 90 days post‑event.
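A sketch of the pseudonymization step. It uses HMAC-SHA256 from Python's standard library and treats the year-specific salt as a secret key, which is our substitution for a plain salted hash: without the key, nobody can recompute hashes for candidate IDs. `pseudonymize` is a hypothetical name, and a real key would come from a secrets store rather than source code.

```python
import hashlib
import hmac


def pseudonymize(user_id: str, year_key: bytes) -> str:
    """Keyed hash (HMAC-SHA256) of a user ID with a per-year secret key.

    Rotating the key each year means hashes from different years cannot be joined.
    """
    return hmac.new(year_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()
```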

❌ Over‑engineering the narrative

It’s tempting to use a full LLM for every sentence, but costs and latency explode. Pre‑generated templates paired with a light ML classifier give 90% of the quality at a tenth of the cost. Reserve LLMs for the “surprise” moment that needs a creative twist.

❌ Not testing on edge cases

A user with zero anomalous events still gets a Wrapped, so show their consistent favorites instead. If the anomaly detector returns fewer than 2 moments, fall back to “Top tracks with a twist” (e.g., “You listened to Bohemian Rhapsody at 3 AM. Classic.”).
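The fallback rule can be sketched as below. The threshold of 2 comes from the text; keeping any anomalous moments that were found, padding with the user's top 3 tracks, and the dict shape of a moment are assumptions, and `select_moments` is a hypothetical name.

```python
def select_moments(anomalous: list[dict], top_tracks: list[str],
                   min_moments: int = 2) -> list[dict]:
    """Return anomaly-based moments, padded with favorites when there are too few.

    anomalous: ranked moments from the detector.
    top_tracks: the user's track IDs, most-played first.
    """
    if len(anomalous) >= min_moments:
        return anomalous
    # "Top tracks with a twist": steady favorites stand in for missing anomalies
    fallback = [{"type": "top_track", "track": t} for t in top_tracks[:3]]
    return anomalous + fallback
```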

Summary

Spotify Wrapped 2025 is the result of a carefully orchestrated data pipeline: streaming events → feature extraction → anomaly detection → story classification → narrative generation → interactive front‑end. The key lessons are weighting listens, respecting privacy, and balancing automation with human‑crafted templates. With these components you can build your own year‑in‑review feature—whether for music, podcasts, or any digital activity. Start small, validate with a pilot, then scale.
